Predictive sales analytics starts with better data, not better models

Most revenue teams have invested in forecasting. They have CRM stages, weighted pipelines, and a weekly forecast call where managers ask reps how confident they feel about their deals.

And most of those forecasts are still wrong.

The problem is not the forecasting method. It is the data feeding it. Predictive sales analytics promises to fix sales forecasting accuracy by replacing gut feel with machine learning and statistical models.

That promise holds up, but only when the underlying data reflects what is happening in your deals. Not what reps remember to log after the fact.

This guide breaks down how predictive sales analytics works, what data it needs to perform, where most implementations fall short, and how to build a foundation that makes your predictions worth trusting.

TLDR

- Most sales forecasts miss because the data feeding them is incomplete, not because the model is wrong.

- Predictive sales analytics uses historical patterns, engagement signals, and conversation data to score deal probability.

- Three data layers drive accuracy: CRM fields (baseline), engagement data (behavior), and conversation data (highest signal).

- Conversation data captures what CRM fields miss: objections, decision-maker involvement, and committed next steps.

- Teams that automate data capture before investing in forecasting models see the largest accuracy gains.

- This guide covers how predictive forecasting works, what data it needs, and how to start without a data science team.

What is predictive sales analytics

Predictive sales analytics is the practice of using historical data, statistical models, and machine learning to forecast future sales outcomes. It goes beyond reporting on what happened last quarter. It identifies patterns in your pipeline, scores the probability of deals closing, and flags risks before they become missed targets.

For revenue teams, this means moving from backward-looking reports to forward-looking predictions. Instead of relying on a rep's stage-by-stage assessment of where a deal stands, predictive analytics examines the full set of signals around that deal.

How fast has the deal moved through the pipeline? How engaged is the buyer? What has been discussed on calls? Does this deal match the patterns of previous wins or losses?

This matters for RevOps teams because sales forecasting accuracy affects resource planning, hiring, territory assignments, and board-level reporting. When forecasts are off by 20-30%, every downstream decision built on those numbers carries the same margin of error.

Think of it as the difference between weather forecasting with satellite data versus looking out the window. Both produce a prediction. One is built on pattern analysis across thousands of data points. The other is built on what you can see from where you stand.

Predictive analytics for sales is not a reporting upgrade. It is a shift in how you generate the numbers your business plans around. And it is where AI sales forecasting starts to separate from the legacy approach of weighted pipeline averages and manager gut checks.

How predictive sales forecasting differs from traditional methods

Traditional sales forecasting and predictive sales forecasting answer the same question: how much revenue will we close this period? They arrive at the answer through different paths.

Traditional forecasting

Traditional forecasting relies on a set of familiar inputs. Reps assign each deal a stage, and the forecast is built with techniques like opportunity stage weighting and historical analysis. Each stage carries a probability weight.

A manager reviews the pipeline, adjusts based on what they heard in the last deal review, and submits a number. The forecast reflects what the team believes will happen.
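As a rough illustration of opportunity stage weighting, the sketch below multiplies each deal's amount by a static probability for its stage and sums the results. The stage names, weights, and deals are hypothetical placeholders, not values from any particular CRM.

```python
# Hypothetical stage weights; real values come from your own CRM configuration.
STAGE_WEIGHTS = {
    "discovery": 0.10,
    "demo": 0.30,
    "proposal": 0.60,
    "commit": 0.90,
}


def stage_weighted_forecast(deals):
    """Sum each deal's amount multiplied by the static weight of its stage."""
    return sum(deal["amount"] * STAGE_WEIGHTS[deal["stage"]] for deal in deals)


# Illustrative pipeline, invented for this example.
pipeline = [
    {"name": "Acme", "stage": "commit", "amount": 50_000},
    {"name": "Globex", "stage": "proposal", "amount": 80_000},
    {"name": "Initech", "stage": "demo", "amount": 40_000},
]

traditional_forecast = stage_weighted_forecast(pipeline)
print(round(traditional_forecast))
```

Every deal in the same stage gets the same weight here, regardless of how the buyer is behaving. That is the limitation predictive scoring tries to address.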

Predictive forecasting

Predictive forecasting starts with data instead of belief. It analyzes historical deal outcomes, identifies which signals correlated with wins and losses, and scores each active deal against those patterns. The forecast reflects what the data shows is likely to happen.

The core difference comes down to what counts as evidence.

Here is what this looks like in practice. A rep marks a deal as "commit" because the prospect said they are moving forward on the last call. Traditional forecasting takes that at face value.

Predictive forecasting checks whether the deal matches the pattern of deals that close. Is a decision-maker involved? Has the deal velocity been consistent? Did the prospect raise objections that went unresolved?

When those signals conflict with the rep's assessment, predictive analytics surfaces the gap. That gap is where forecast accuracy lives.

The shift from traditional to predictive is not about replacing human judgment. It is about giving human judgment better raw material. Whether your team uses a top-down or bottom-up forecasting structure, reps and managers still make the final call, but they make it with data that reflects deal reality rather than deal optimism.

AI sales forecasting takes this a step further by learning from each deal outcome and refining its models over time. A traditional forecast made the same way for 12 months tends to produce the same accuracy. A predictive model running for 12 months can get more accurate each quarter because it has more data to learn from.

What data powers predictive sales forecasting

Predictive models produce outputs that match the quality of their inputs. This is where most implementations run into trouble, and it is the part that seldom gets enough attention.

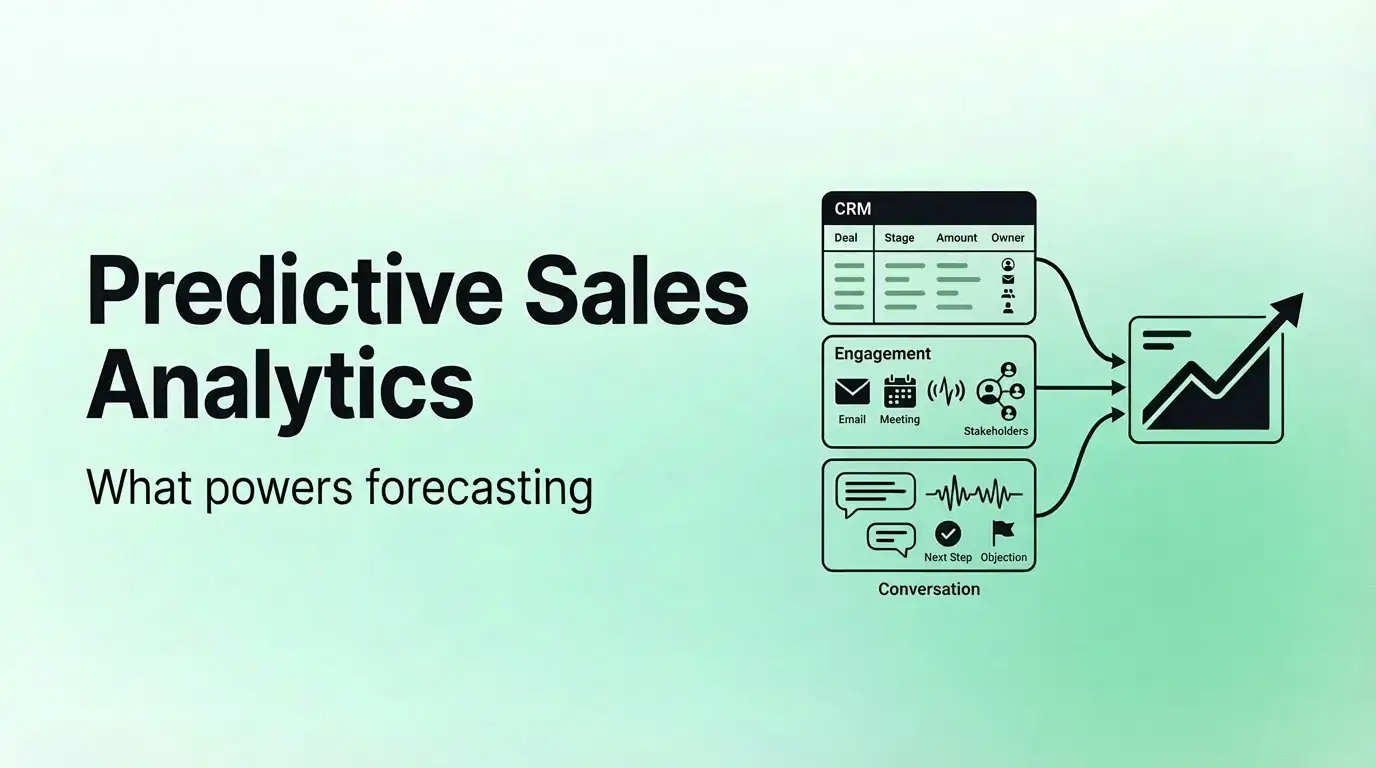

Three layers of data feed a predictive sales forecasting model, and they are not created equal.

Layer 1: CRM data

This is the baseline. Deal stage, close date, deal amount, number of contacts involved, activity count. Every forecasting model uses this data.

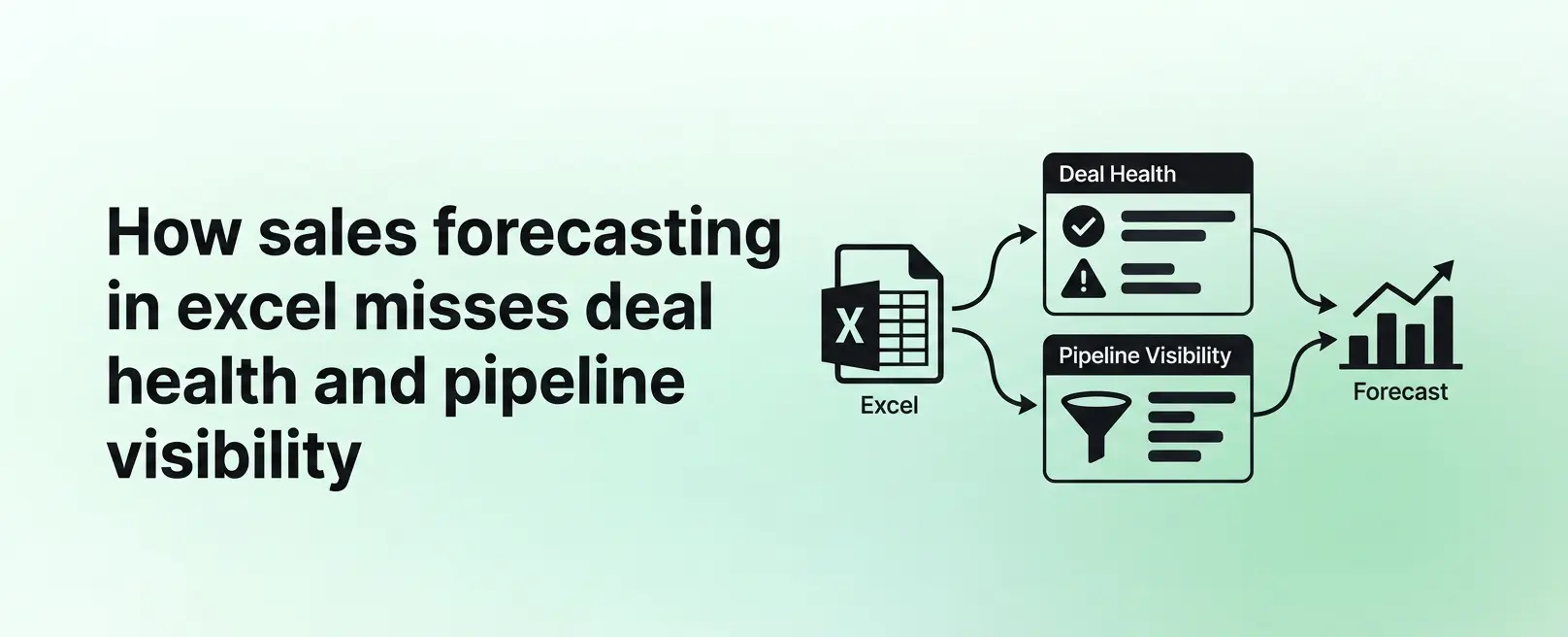

The problem is that CRM data depends on reps entering it, and reps are inconsistent. Fields go blank. Close dates get pushed without explanation.

Activity logs show a meeting happened but not what was discussed.

CRM data tells you the structure of a deal. It does not tell you the substance.

Layer 2: Engagement data

This includes email response times, meeting frequency, the number of stakeholders involved, and whether the prospect is engaging with shared content. Engagement data adds a behavior layer on top of CRM structure.

If a prospect stops responding to emails and cancels meetings, that shows up here before it shows up in a CRM stage change. This layer tracks what people do, not what reps enter. Avoma captures these buyer engagement signals and uses them to surface deals that need attention before the CRM reflects the change.

Layer 3: Conversation data

This is the highest-signal input, and the one most teams are missing. Conversation data captures what was said on calls: objections raised, questions asked, decision-makers identified, next steps committed to, pricing discussions, and competitive mentions.

Conversation data matters for forecasting because it reflects deal reality in a way that CRM fields cannot. Avoma, for example, analyzes deal activity and momentum from conversations, tracking how fast deals progress, where they stall, and what those patterns signal about risk.

A rep might update the CRM to say "demo completed, moving to proposal." The conversation data might show that the prospect asked about two competing products, raised a budget objection that went unaddressed, and that the decision-maker was not on the call. Those two versions of the same deal tell very different stories.

Predictive models that include conversation data can spot the difference. Models that rely on CRM fields alone cannot.

The data hierarchy looks like this:

The completeness column matters most. Conversation data has the highest signal value, but it is the least complete in most organizations. Capturing it requires either manual note-taking (which is inconsistent) or automated systems that transcribe, summarize, and sync meeting data to the CRM.

When you automate the capture of conversation data and meeting activity, the quality of your predictive model improves at the source. You are not building predictions on what reps remembered to log. You are building them on what was said and done.

This is the foundation that separates a predictive model that works from one that produces confident-looking numbers on top of incomplete data.

How predictive analytics works in sales forecasting

Understanding the mechanics helps you evaluate whether a tool or approach will work for your team. Here is how predictive analytics works in a sales context, without the data science jargon.

Step 1: Data collection and aggregation

The model pulls data from your CRM, email systems, calendar, and (when available) conversation intelligence tools. It creates a composite view of each deal that combines structural data (stage, amount, close date) with behavioral data (engagement frequency, stakeholder count) and conversation signals (topics discussed, objections raised).

Step 2: Pattern recognition

The model analyzes your historical closed-won and closed-lost deals to find which characteristics predicted each outcome. Maybe deals that included a VP-level stakeholder by the third meeting closed at 3x the rate of deals that did not. Maybe deals where pricing came up in the first call had a lower win rate than deals where it came up in the third.
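One simple form of this pattern analysis is comparing win rates for closed deals with and without a given signal. The sketch below does that for a single yes/no signal. The field name `vp_by_third_meeting` and the sample deals are hypothetical, and a production model would weigh many signals together rather than one at a time.

```python
def win_rate(deals):
    """Share of closed deals that were won; 0.0 for an empty list."""
    if not deals:
        return 0.0
    return sum(1 for d in deals if d["won"]) / len(deals)


def win_rate_lift(closed_deals, signal):
    """Compare win rate for deals that had a signal against deals that did not."""
    with_signal = [d for d in closed_deals if d[signal]]
    without_signal = [d for d in closed_deals if not d[signal]]
    rate_with = win_rate(with_signal)
    rate_without = win_rate(without_signal)
    # Lift is undefined when the comparison group never wins; report infinity.
    lift = rate_with / rate_without if rate_without else float("inf")
    return rate_with, rate_without, lift


# Illustrative history, invented for this example.
closed_deals = [
    {"vp_by_third_meeting": True, "won": True},
    {"vp_by_third_meeting": True, "won": True},
    {"vp_by_third_meeting": True, "won": True},
    {"vp_by_third_meeting": True, "won": False},
    {"vp_by_third_meeting": False, "won": True},
    {"vp_by_third_meeting": False, "won": False},
    {"vp_by_third_meeting": False, "won": False},
    {"vp_by_third_meeting": False, "won": False},
]

rate_with, rate_without, lift = win_rate_lift(closed_deals, "vp_by_third_meeting")
print(rate_with, rate_without, lift)
```

With only a handful of deals per group, a lift figure like this is noisy. It becomes more trustworthy as the number of closed deals grows.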

The model identifies hundreds of these patterns across your data. No human could track this many variables at once. That is where the machine learning layer earns its value.

Step 3: Probability scoring

Each active deal gets scored against those patterns. A deal that matches 80% of your historical win characteristics gets a higher probability than one that matches 40%.

The model also flags specific risk signals: a deal that is moving slower than similar deals that closed, a deal where the primary contact has gone silent, or a deal approaching close date with unresolved objections from the last call.
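To make the idea concrete, here is a deliberately simplified sketch of scoring and risk flagging. It scores a deal by the fraction of win characteristics it matches and applies a few threshold rules for risk. The characteristics, field names, and thresholds are all assumptions chosen for illustration. The match score is not a calibrated probability, and real tools typically learn weights from historical outcomes instead of counting matches.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    name: str
    decision_maker_engaged: bool
    multi_threaded: bool
    next_step_committed: bool
    objections_resolved: bool
    days_in_stage: int
    days_since_last_reply: int


# Hypothetical characteristics assumed to be shared by historical wins.
WIN_CHARACTERISTICS = (
    "decision_maker_engaged",
    "multi_threaded",
    "next_step_committed",
    "objections_resolved",
)


def match_score(deal):
    """Fraction of the win characteristics that this deal currently matches."""
    matched = sum(1 for c in WIN_CHARACTERISTICS if getattr(deal, c))
    return matched / len(WIN_CHARACTERISTICS)


def risk_flags(deal, typical_days_in_stage=21, silence_threshold_days=14):
    """Return human-readable risk flags based on assumed thresholds."""
    flags = []
    if deal.days_in_stage > typical_days_in_stage:
        flags.append("slower than similar won deals")
    if deal.days_since_last_reply > silence_threshold_days:
        flags.append("primary contact has gone silent")
    if not deal.objections_resolved:
        flags.append("unresolved objections")
    return flags


example = Deal(
    name="Globex",
    decision_maker_engaged=False,
    multi_threaded=True,
    next_step_committed=True,
    objections_resolved=False,
    days_in_stage=30,
    days_since_last_reply=3,
)

print(match_score(example), risk_flags(example))
```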

Some platforms take a complementary approach to scoring. Avoma qualifies and scores deals based on your sales methodology (MEDDIC, SPICED, or a custom framework) and the conversation history within each deal, so you get a qualification signal grounded in what was discussed.

Step 4: Forecast calculation

The model aggregates deal-level probabilities into a pipeline-level forecast. Instead of summing raw pipeline values using static stage probabilities, it calculates weighted amounts based on each deal's individual signal profile.

In Avoma, this shows up as a weighted amount column in the forecast deals table, so you can see the probability-adjusted value for each deal alongside its full pipeline value. The result is a forecast grounded in deal-level data, not averages.
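The aggregation step can be sketched in a few lines, assuming each deal already carries its own probability from the scoring step. This is an illustration of the general idea, not a description of how Avoma or any other product computes its weighted amount. The deals and probabilities are invented.

```python
def weighted_forecast(deals):
    """Return per-deal weighted amounts and their sum (the pipeline forecast)."""
    rows = [
        {
            "name": d["name"],
            "amount": d["amount"],
            "weighted_amount": d["amount"] * d["probability"],
        }
        for d in deals
    ]
    total = sum(row["weighted_amount"] for row in rows)
    return rows, total


# Illustrative deals with deal-level probabilities (hypothetical values).
scored_pipeline = [
    {"name": "Acme", "amount": 50_000, "probability": 0.45},
    {"name": "Globex", "amount": 80_000, "probability": 0.70},
    {"name": "Initech", "amount": 40_000, "probability": 0.25},
]

rows, forecast_total = weighted_forecast(scored_pipeline)
for row in rows:
    print(row["name"], row["amount"], round(row["weighted_amount"]))
print("forecast:", round(forecast_total))
```

Two deals in the same stage can end up with very different weighted amounts, which is the point of scoring each deal on its own signals.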

Step 5: Continuous learning

As deals close or fall out, the model updates its patterns. A model that has been running for six months is more accurate than one running for two weeks because it has seen more outcomes in your specific selling environment.

The value here is not that the model replaces your judgment. It gives you a second opinion grounded in every deal your team has closed or lost, not just the ones you remember.

For pipeline reporting, this means your numbers carry a confidence level. You can tell your CFO that your $2M forecast is built on deal-level probability scoring, not on a manager's gut check during a Friday afternoon pipeline review.

Benefits of predictive sales forecasting for B2B teams

The benefits of predictive sales forecasting become concrete when you tie them to specific workflow changes, not abstract improvements.

- Sales forecasting accuracy improves when the data is right. Teams that move from traditional stage-weighted forecasting to predictive methods see accuracy improvements from the 60-70% range to 85% and above. That improvement depends on data quality.

A predictive model trained on incomplete CRM data will produce better-looking forecasts that are still wrong. When you add conversation and engagement data to the model, the accuracy gains hold up because the inputs reflect deal reality.

- Pipeline risk surfaces before deals slip. In a traditional process, you find out a deal is at risk when the rep moves it to the next quarter or when it falls off the forecast. Predictive models flag risk signals in real time: a deal that stopped progressing, a buyer who went silent, a late-stage deal where the economic buyer has never been on a call.

In Avoma, risk scores and qualification scores (derived from real-time deal behavior) appear as columns in the forecast submission view, so managers can see deal-level risk while reviewing their commit numbers. You can intervene before the deal slips rather than explaining why it did.

- Rep time goes where it matters. When every deal carries a data-driven probability score, reps and managers can prioritize with more clarity. A rep with 30 active opportunities can focus on the 10 with the highest predicted close rate and the 5 that need specific action to move forward.

That is time spent selling rather than time spent guessing where to focus.

- Coaching signals emerge from deal patterns. Predictive analytics does more than forecast. It surfaces which behaviors correlate with winning.

If reps who multi-thread across three stakeholders by the second meeting win at 2x the rate, that is a coaching signal. Managers can use these patterns to build specific, data-backed coaching playbooks instead of offering generic advice.

- Resource planning gets a foundation. Hiring plans, territory assignments, and capacity models all depend on forecast accuracy. When your forecast improves from a range estimate to a probability-weighted projection, the decisions you build on it carry less risk.

Finance, marketing, and customer success teams all benefit when the sales forecast is grounded in data rather than opinion. Board-level reporting becomes a conversation about confidence ranges instead of a debate about whether the number is real.

Common challenges implementing predictive sales analytics and how to address them

Predictive sales analytics often fails not because the model is wrong, but because the data, adoption, and expectations around it are not set up for success.

- Incomplete CRM data. This is the most common problem. If reps do not update deal fields, close dates, and activity notes, the model trains on incomplete information. The output looks sophisticated, but the foundation is weak.

Automate data capture at the source. When meeting notes, call summaries, and CRM fields update from conversations instead of manual entry, the completeness gap closes. Tools that auto-sync meeting data to your CRM remove the dependency on rep behavior for data quality. The goal is a system where data flows into the CRM as a byproduct of doing the work, not as a separate task.

- Adoption resistance from reps and managers. Teams distrust AI-generated forecasts when the model contradicts what a rep believes about their deal. If the model says a deal is at risk and the rep says it is on track, the team defaults to the rep.

Use predictive scores as a complement to rep judgment, not a replacement. Show the team specific cases where the model flagged a risk that turned out to be real. Over time, the model earns trust by being right about things the team missed.

Start with pipeline reviews where the model's risk flags are discussed alongside the rep's assessment. Frame it as a second opinion, not an override.

Recurring forecast reminders (sent via email or Slack on a set cadence) help build the submission habit so predictive data stays current. Submission history tracking adds accountability by logging who updated what and when, so the team can see how their forecast evolved over the quarter.

- Limited historical data. New teams, new products, or new market segments do not have enough closed deals to train a reliable model. The model needs patterns, and patterns need volume.

Start with basic models that rely on fewer signals and layer in complexity as your data set grows. Conversation data can compensate for thin pipeline history because it provides richer per-deal signals. Ten deals with full conversation data can teach a model more than fifty deals with bare CRM entries. Methodology-based scoring tools like Avoma work well here because they evaluate each deal against your defined qualification criteria and that deal's own conversation history, rather than requiring a large volume of past outcomes to train against.

- Treating model output as certainty. A predictive model produces probabilities, not guarantees. A deal scored at 80% still has a 20% chance of not closing. Teams that treat predictions as commitments build fragile forecasts.

Report forecasts with confidence ranges, not single numbers. Use the model's output to identify where human judgment should override the prediction. The goal is a forecast that is more accurate than gut feel, not one that is infallible.
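One way to turn deal-level probabilities into a range is sketched below. It treats each deal as an independent win-or-lose event, computes the expected revenue and its standard deviation, and reports the expected value plus or minus a multiple of that deviation. Both the independence assumption and the normal approximation behind `z=1.28` (roughly an 80% band) are simplifications, and they are rough for small pipelines. The deals are invented.

```python
import math


def forecast_range(deals, z=1.28):
    """Expected revenue with an approximate low/high band.

    Assumes deals close independently of each other, which real
    pipelines only approximately satisfy.
    """
    expected = sum(d["amount"] * d["probability"] for d in deals)
    # Variance of a win-or-lose deal is amount^2 * p * (1 - p).
    variance = sum(
        d["amount"] ** 2 * d["probability"] * (1 - d["probability"]) for d in deals
    )
    spread = z * math.sqrt(variance)
    total_pipeline = sum(d["amount"] for d in deals)
    low = max(0.0, expected - spread)
    high = min(total_pipeline, expected + spread)
    return low, expected, high


# Illustrative deals (hypothetical values).
deals = [
    {"name": "Acme", "amount": 100_000, "probability": 0.8},
    {"name": "Globex", "amount": 50_000, "probability": 0.5},
]

low, expected, high = forecast_range(deals)
print(f"forecast {expected:,.0f} (range {low:,.0f} to {high:,.0f})")
```

The width of the band is a useful signal in its own right: a pipeline concentrated in a few large, uncertain deals produces a much wider range than one spread across many smaller ones.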

How to get started with predictive sales forecasting

You do not need a data science team or a six-month implementation to start with predictive sales forecasting. You need a clear sequence and a realistic view of your data.

Step 1: Audit your data

Before you evaluate any tool or model, look at what your team captures today. Pull a sample of a few recent deals and check: How many have complete CRM data? How many have meeting notes attached? How many have conversation data from calls?

The gaps in that sample are the gaps your predictive model will inherit. If 60% of deals are missing contact roles or have no call notes, that is your starting line.
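A quick audit like this can be scripted against a CRM export. The sketch below computes, for each field, the share of sampled deals that have a non-empty value. The field names and sample records are hypothetical, so substitute whatever your export contains.

```python
# Hypothetical field names; replace with the columns in your own CRM export.
REQUIRED_FIELDS = (
    "close_date",
    "amount",
    "contact_roles",
    "meeting_notes",
    "call_transcript",
)


def field_completeness(deals, fields=REQUIRED_FIELDS):
    """Share of deals with a non-empty value for each field.

    Empty strings, empty lists, None, and 0 all count as missing.
    """
    if not deals:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for d in deals if d.get(field)) / len(deals)
        for field in fields
    }


# Illustrative sample of recent deals, invented for this example.
sample = [
    {"close_date": "2024-03-29", "amount": 40_000, "contact_roles": ["champion"],
     "meeting_notes": "Pricing review", "call_transcript": "transcript-001"},
    {"close_date": "2024-04-15", "amount": 25_000, "contact_roles": [],
     "meeting_notes": "", "call_transcript": None},
    {"close_date": "2024-04-30", "amount": 60_000, "contact_roles": ["economic buyer"],
     "meeting_notes": "Security questions", "call_transcript": None},
    {"close_date": None, "amount": 15_000},
]

completeness = field_completeness(sample)
for field, share in completeness.items():
    print(f"{field}: {share:.0%}")
```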

Step 2: Automate data capture

This is the step most teams skip, and it is the one that matters most. If your CRM data depends on reps updating fields after every call, your data will always be inconsistent.

Automate meeting notes, CRM sync, and call logging so your data reflects what happened, not what someone remembered to enter. This single step changes the quality of every model you build on top of it.

Step 3: Define your model inputs

Decide which signals you want your predictive model to consider. Start with the basics: deal stage, deal age, amount, stakeholder count, and activity volume. Then layer in engagement signals and conversation data as your automated capture matures.

You do not need every signal on day one. Start with what you have and expand as the data gets richer.

Step 4: Run a pilot

Apply predictive scoring to a segment of your pipeline for one quarter. Compare the model's predictions against your traditional forecast. Track where they align and where they diverge.

The divergence points are where you learn the most about your process. When the model and the team disagree, go back to the conversation data and find out which one was right.
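Once the pilot quarter closes, the comparison can be tallied with a short script. The sketch below assumes you recorded, for each deal, the model's probability, whether the rep committed the deal, and the actual outcome. It reports how often each call was right and lists the deals where the two disagreed. The 0.5 threshold and the sample results are assumptions for illustration.

```python
def compare_pilot(results, threshold=0.5):
    """Return model accuracy, rep accuracy, and the deals where they disagreed."""
    if not results:
        return 0.0, 0.0, []
    model_hits = 0
    rep_hits = 0
    divergences = []
    for r in results:
        model_call = r["model_probability"] >= threshold
        rep_call = r["rep_committed"]
        if model_call == r["won"]:
            model_hits += 1
        if rep_call == r["won"]:
            rep_hits += 1
        if model_call != rep_call:
            # Exactly one of the two calls matched the outcome.
            who_was_right = "model" if model_call == r["won"] else "rep"
            divergences.append((r["name"], who_was_right))
    return model_hits / len(results), rep_hits / len(results), divergences


# Illustrative pilot results, invented for this example.
pilot_results = [
    {"name": "Acme", "model_probability": 0.8, "rep_committed": True, "won": True},
    {"name": "Globex", "model_probability": 0.3, "rep_committed": True, "won": False},
    {"name": "Initech", "model_probability": 0.6, "rep_committed": False, "won": False},
    {"name": "Umbrella", "model_probability": 0.2, "rep_committed": True, "won": False},
]

model_accuracy, rep_accuracy, divergences = compare_pilot(pilot_results)
print(model_accuracy, rep_accuracy, divergences)
```

The divergence list is the review agenda: each entry is a deal worth revisiting in the call recordings to see what the model or the rep picked up on.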

Step 5: Iterate based on outcomes

After the pilot, review which deals the model got right that the team got wrong, and vice versa. Adjust the model inputs and expand the scope.

Add new data sources as they become available.

Predictive forecasting is not a one-time setup. It improves as your data grows and your team learns to use it alongside their own judgment.

The first step is not choosing a tool. It is making sure the data your tool needs exists and updates without depending on rep behavior.

Conclusion

Predictive sales analytics changes how revenue teams forecast by replacing subjective assessment with data-driven probability scoring. The models work. The math works. The place where most teams stumble is upstream: the data feeding the model.

If your CRM fields depend on reps updating them after every call, your data will always have gaps. If your forecasting model has never seen what was said on a call, it is working with an incomplete picture of every deal in your pipeline.

The teams that get the most from predictive sales forecasting are the ones that fix the data layer first. They automate meeting notes, sync conversation data to their CRM, and build their predictions on what happened in deals rather than what someone remembered to log.

Sales forecasting accuracy is not a model problem. It is a data problem. Start there, and the model will follow.
